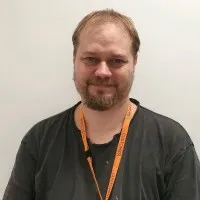

Prakash Nayak


About me

Software Engineer II at Zebra Technologies

Education

Biju Patnaik University of Technology, Odisha

2010 - 2014

Bachelor’s Degree, Bachelor of Technology (BTech)

Activities and Societies: Electronics and Telecommunication Engineering

Experience

Accenture

Sept 2015 - Mar 2019

• Experience working with a large number of tables and large volumes of data.
• Experience working in the healthcare domain.
• Expertise in writing complex SQL queries, writing and executing test cases, and a good understanding of databases.
• Experience in ETL testing, BI testing, and database testing.
• Experience analyzing and understanding customer requirements and deriving test scenarios and conditions from them.
• Experience executing different types of system test cases based on user requirements.
• Experience with Quality Center 11.5.
• Experience preparing execution status reports and defect reports.
• Experience in back-end testing using SQL.
• Hands-on experience in BI report testing.
• Expertise in testing enterprise dashboards.
• Good knowledge of data warehouse concepts.
• Experience with Oracle Database.
• Extensively used Workflow Manager and Workflow Monitor in Informatica PowerCenter.
• Good exposure to UNIX.
• Able to handle multiple tasks and work independently as well as in a team.

• Developed ETL scripts in SQL for an ongoing contracted request that required aggregating data from multiple comScore data sources and then automating the process.
• Created and identified ETL test data for all ETL mappings.
• Tested all phases of extract, transform, and load, from extracting the source data to loading it into the target after applying the necessary transformations.
• Developed SQL queries to validate the data, such as checking for duplicates and null values, and ensuring correct data aggregation and adherence to ETL mapping rules.
• Prepared test scenarios and test cases for module, integration, and regression testing, and executed the test cases in HP ALM.
• Tracked defects using HP ALM.

At Accenture I worked as an Oracle Applications Technical Consultant, with extensive knowledge of Oracle Apps modules such as Order Management, Inventory, Purchasing, and Oracle Finance. I also have proficient programming experience in SQL and PL/SQL, along with good knowledge of the Procure-to-Pay and Order-to-Cash cycles. I am a highly motivated, pragmatic team player with strong interpersonal and communication skills who coordinates and interacts well in a team environment, and an effective communicator with excellent relationship-management skills.

Software Engineer Analyst

Mar 2017 - Mar 2019

Associate Software Engineer

Sept 2015 - Mar 2017

Associate Software Engineer

Sept 2015 - Sept 2015

Sonata Software

Mar 2019 - Feb 2022

Snowflake || AWS EC2 || Python || AWS S3 || QuickSight

This project was introduced to increase efficiency, with a focus on product phase-out. Phase-out is the process of gradually stopping production at different hierarchy levels (LOB, LPC, CAD), regions, and markets, based on factors such as product demand, product sales, future forecasts, and regional decisions. The entire process was manual, which caused inefficiency. The process was digitized, automating data generation and making the data available to the business on an ad hoc basis as dashboards, so that the business can make decisions and monitor the process.

Responsibilities:
• Worked closely with the client to understand new requirements during development, and with product owners to get approval on stories as part of CRs or releases.
• Led a team of 2 members and coordinated with multiple upstream and downstream teams and higher management.
• Participated in all phases of research, including data collection, data cleaning, and visualization.
• Visually plotted data using QuickSight for dashboards and reports.
• Examined data quality, including handling duplicate values, replacing missing values, and transforming incorrect data types into well-structured formats.
• Used data visualization in Python and QuickSight to find general patterns among different variables within the dataset.
• Performed detailed analysis of source systems and source system data, and modeled that data in QuickSight.
• Designed, developed, and tested scripts to import data from source systems, and tested dashboards to meet customer requirements.
• Used data from AWS S3 for processing and uploaded data to AWS S3 using AWS KMS encryption.
• Deployed a Spark cluster on AWS EC2 using EMR for efficient data processing.
• Created passwordless connections on the AWS cluster.
Big Data || Data Warehouse || Hive || MS SQL || Greenplum (PostgreSQL) || Python || StreamSets || Avro || JSON || HDFS || Impala || Postman || HBase || Oozie || TFS || SQL || Sqoop || Kafka || YARN || Jython || NoSQL || MongoDB || PySpark

Program for internet news and email: the main objective of the program is to identify golden records from all sources loaded into CDI (HDFS). Each record goes through cleansing, AVS, and survivorship rules, and the final matched outcome is the golden record. Using the golden record, the **** marketing team conducts business and sends newsletters, emails, and organizational updates to end users.

Responsibilities:
• Created jobs and sub-jobs using Spark for data ingestion into the data lake, processing, and loading into marts.
• Created and customized Spark components and Hive bulk loads.
• Worked on advanced data aggregation using Spark.
• Extracted data from SQL into HDFS using Sqoop.
• Created Sqoop jobs with incremental loads to populate Hive external tables.
• Created Sqoop scripts to refresh newly generated data.
• Extensive experience writing Hive scripts to transform and process raw data.
• Used Hive for transformations, event joins, and some pre-aggregations before storing the data in HDFS.
• Involved in creating and optimizing Hive tables to store data.
• Handled both structured and semi-structured data using Hive.
• Experience using JSON and Avro file formats.
• Created Oozie workflow and coordinator jobs to kick off jobs on time for data availability.
• Used Control-M to schedule and trigger these jobs in a single workflow.

Lead Digital Engineer

Jan 2021 - Feb 2022

Senior System Analyst

Mar 2019 - Jan 2021

Zebra Technologies

Feb 2022 - now

Software Engineer II

BigQuery || BigQuery ML || Data Studio || Elasticsearch || Airflow || Python || PostgreSQL || PySpark || Pub/Sub || Sisense || Dataflow || Dataproc

Licenses & Certifications


Python for Data Science

IBM, Sept 2020

Docker Essentials: A Developer Introduction

IBM, Sept 2020

Data Analysis Using Python

IBM, Sept 2020

Intro to SQL for Data Science

DataCamp, Jul 2017

Cleaning Data with PySpark

DataCamp, Sept 2020

Machine Learning with Python - Level 1

IBM, Sept 2020

Big Data with PySpark

DataCamp, Sept 2020

Getting Started with Apache Spark SQL

Databricks, Mar 2020

Introduction to Data Engineering

DataCamp, Sept 2020

Become a Software Developer

LinkedIn, Oct 2020

Scrum Foundation Professional Certificate

CertiProf, Oct 2020

Cloud-Native Database

Alibaba Cloud, Sept 2020

Spark - Level 1

IBM, Sept 2020

MSUS Cloud Skills Challenge Champion

Microsoft, Feb 2021

Machine Learning with PySpark

DataCamp, Sept 2020

GSQL101

TigerGraph, Jun 2021

Hadoop Foundations - Level 1

IBM, Sept 2020

Artificial Intelligence

Accenture, Sept 2020

Database Design

DataCamp, Nov 2020

Data Engineering

DataCamp, Sept 2020

Data Visualization Using Python

IBM, Sept 2020

Volunteer Experience

Fundraising Coordinator

HelpAge India

Blood Donation Camp

Red Cross Youth, May 2013

Languages

- English

- Oriya

- Hindi

- Bengali

